Top Useful ML Practices For Python Developers

Just because we can make models doesn’t mean we are gods. It doesn’t give us the freedom to write crap code.

Since I started, I have made some tremendous mistakes, so I thought of sharing what I see as the most useful skills for ML engineering. In my opinion, ML engineering is also the skill most lacking in the industry right now.

I call the people who lack it software-illiterate data scientists because a lot of them are non-CS, Coursera-baptised engineers. And I have been one myself 😅

If I had to choose between hiring a great data scientist and a great ML engineer, I would hire the latter.

Let’s get started.

1. Learn to write abstract classes

Once you start writing abstract classes, you will see how much clarity they bring to your codebase. They enforce the same methods and method names across implementations. Without them, when many people work on the same project, everyone invents their own methods, which creates unnecessary and unproductive chaos.

import os
from abc import ABCMeta, abstractmethod


class DataProcessor(metaclass=ABCMeta):
    """Base processor to be used for all preparation."""

    def __init__(self, input_directory, output_directory):
        self.input_directory = input_directory
        self.output_directory = output_directory

    @abstractmethod
    def read(self):
        """Read raw data."""

    @abstractmethod
    def process(self):
        """Processes raw data.

        This step should create the raw dataframe with all the required
        features. Shouldn't implement statistical or text cleaning.
        """

    @abstractmethod
    def save(self):
        """Saves processed data."""

class Trainer(metaclass=ABCMeta):
    """Base trainer to be used for all models."""

    def __init__(self, directory):
        self.directory = directory
        self.model_directory = os.path.join(directory, 'models')

    @abstractmethod
    def preprocess(self):
        """This takes the preprocessed data and returns clean data.

        This is more about statistical or text cleaning.
        """

    @abstractmethod
    def set_model(self):
        """Define model here."""

    @abstractmethod
    def fit_model(self):
        """This takes the vectorised data and returns a trained model."""

    @abstractmethod
    def generate_metrics(self):
        """Generates metric with trained model and test data."""

    @abstractmethod
    def save_model(self, model_name):
        """This method saves the model in our required format."""

class Predict(metaclass=ABCMeta):
    """Base predictor to be used for all models."""

    def __init__(self, directory):
        self.directory = directory
        self.model_directory = os.path.join(directory, 'models')

    @abstractmethod
    def load_model(self):
        """Load model here."""

    @abstractmethod
    def preprocess(self):
        """This takes the raw data and returns clean data for prediction."""

    @abstractmethod
    def predict(self):
        """This is used for prediction."""

class BaseDB(metaclass=ABCMeta):
    """Base database class to be used for all DB connectors."""

    @abstractmethod
    def get_connection(self):
        """This creates a new DB connection."""

    @abstractmethod
    def close_connection(self):
        """This closes the DB connection."""

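To see the enforcement in action, here is a small sketch. `BaseProcessor`, `UppercaseProcessor` and `HalfFinishedProcessor` are hypothetical names made up for this example, and the base class is a trimmed-down stand-in rather than the full `DataProcessor`. The point is only that Python refuses to instantiate a subclass that skips an abstract method.

```python
from abc import ABCMeta, abstractmethod


class BaseProcessor(metaclass=ABCMeta):
    """Hypothetical, trimmed-down base class in the spirit of DataProcessor."""

    @abstractmethod
    def read(self):
        """Return the raw records."""

    @abstractmethod
    def process(self, records):
        """Return the cleaned records."""


class UppercaseProcessor(BaseProcessor):
    """Implements every abstract method, so it can be instantiated."""

    def read(self):
        return ["alpha", "beta"]

    def process(self, records):
        return [record.upper() for record in records]


class HalfFinishedProcessor(BaseProcessor):
    """Forgets to implement process(), so instantiation fails."""

    def read(self):
        return []


processor = UppercaseProcessor()
result = processor.process(processor.read())

try:
    HalfFinishedProcessor()
    error_message = None
except TypeError as exc:
    # Python names the missing abstract method in the error.
    error_message = str(exc)

print(result)
print(error_message)
```

So a teammate who forgets a method finds out the moment the class is instantiated, not halfway through a training run.
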
2. Fix your seed at the top

Reproducibility of experiments is very important, and an unfixed seed is our enemy. Pin it down; otherwise every run splits the train/test data differently and initialises the neural network's weights differently, which leads to inconsistent results.

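import random

import numpy as np
import torch


def set_seed(args):
    """Seed Python, NumPy and PyTorch from args.seed (args also carries n_gpu)."""
    random.seed(args.seed)
    np.random.seed(args.seed)
    torch.manual_seed(args.seed)
    if args.n_gpu > 0:
        # Seed every visible GPU as well.
        torch.cuda.manual_seed_all(args.seed)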

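To make the train/test point concrete, here is a minimal standard-library sketch. `split_train_test` is a hypothetical helper written for this example, not something from scikit-learn or PyTorch; it only shows that the same seed gives the same split every time.

```python
import random


def split_train_test(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of rows with a private seeded RNG, then split it."""
    rng = random.Random(seed)  # local generator, independent of global state
    shuffled = list(rows)
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


rows = list(range(10))
train_a, test_a = split_train_test(rows, seed=42)
train_b, test_b = split_train_test(rows, seed=42)

# Same seed, same split, on every run.
same_split = (train_a, test_a) == (train_b, test_b)
print(same_split)
```

Using a local `random.Random(seed)` rather than the global generator is a design choice: other code calling `random` in between cannot disturb the split.
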

3. Get started with a few rows

If your data is too big and you are working on a later part of the code, such as data cleaning or modeling, use nrows to avoid loading the whole dataset every time. Use this when you only want to test the code, not actually run the whole thing.

This is especially useful when your local PC is not powerful enough for the full dataset but you like developing locally in Jupyter, VS Code or Atom.

import pandas as pd

df_train = pd.read_csv('train.csv', nrows=1000)

4. Anticipate failures (sign of a mature developer)

Always check for NAs in the data because they will cause you problems later. Even if your current data has none, that doesn't mean they won't show up in future retraining loops. So keep the check anyway 😆

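print(len(df))          # rows before cleaning
print(df.isna().sum())  # missing values per column
df = df.dropna()        # dropna() returns a new frame, so reassign it
print(len(df))          # rows after cleaning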
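If you want the check to live in one place and tell you what it threw away, something like the sketch below could work. `drop_missing` and the toy column names are assumptions made up for this example; only `isna`, `sum` and `dropna(subset=...)` are real pandas calls.

```python
import pandas as pd


def drop_missing(df, required_columns):
    """Drop rows with NA in required_columns and report how many were lost."""
    missing_per_column = df[required_columns].isna().sum()
    cleaned = df.dropna(subset=required_columns)
    dropped = len(df) - len(cleaned)
    return cleaned, dropped, missing_per_column.to_dict()


# Toy frame standing in for real training data.
df = pd.DataFrame({"text": ["good", None, "bad"], "label": [1, 0, None]})
cleaned, dropped, missing = drop_missing(df, ["text", "label"])
print(f"dropped {dropped} of {len(df)} rows; missing per column: {missing}")
```

Logging the dropped count on every retraining run makes it obvious when a new data dump suddenly starts losing rows.
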

5. Show the progress of processing

When you are working with big data, it definitely feels good to know how long the processing will take and how far along you are.

Option 1 — tqdm

from tqdm import tqdm
import time

tqdm.pandas()
df['col'] = df['col'].progress_apply(lambda x: x**2)

text = ""
for char in tqdm(["a", "b", "c", "d"]):
    time.sleep(0.25)
    text = text + char


Option 2 — fastprogress

from fastprogress.fastprogress import master_bar, progress_bar
from time import sleep

mb = master_bar(range(10))
for i in mb:
    for j in progress_bar(range(100), parent=mb):
        sleep(0.01)
        mb.child.comment = f'second bar stat'
    mb.first_bar.comment = f'first bar stat'
    mb.write(f'Finished loop {i}.')


6. Pandas can be slow

If you have worked with pandas, you know how slow it can get sometimes, especially groupby. Rather than racking our brains for ‘great’ speed-up solutions, just try Modin, which parallelises pandas operations across your CPU cores, by changing one line of code.

import modin.pandas as pd

7. Time the functions

Not all functions are created equal.

Just because the whole code works doesn't mean you wrote great code. Some soft bugs can make your code slower, and it's necessary to find them. Use this decorator to log how long functions take.

import time
from functools import wraps


def timing(f):
    """Decorator for timing functions.

    Usage:

    @timing
    def function(a):
        pass
    """
    @wraps(f)
    def wrapper(*args, **kwargs):
        start = time.time()
        result = f(*args, **kwargs)
        end = time.time()
        print('function:%r took: %2.2f sec' % (f.__name__, end - start))
        return result
    return wrapper

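Printing is fine for a quick look, but to actually find the slow functions it helps to collect the numbers. Below is a rough variant of the same idea. `timed`, `TIMINGS`, `slow_step` and `fast_step` are hypothetical names for this sketch, and it uses `time.perf_counter`, which is meant for measuring intervals.

```python
import time
from collections import defaultdict
from functools import wraps

# Hypothetical registry: function name -> call count and total seconds.
TIMINGS = defaultdict(lambda: {"calls": 0, "total_sec": 0.0})


def timed(f):
    """Record how often f is called and how long it takes in total."""
    @wraps(f)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return f(*args, **kwargs)
        finally:
            # Runs even if f raises, so failed calls are timed too.
            stats = TIMINGS[f.__name__]
            stats["calls"] += 1
            stats["total_sec"] += time.perf_counter() - start
    return wrapper


@timed
def slow_step():
    time.sleep(0.01)


@timed
def fast_step():
    return sum(range(100))


for _ in range(2):
    slow_step()
fast_value = fast_step()

# Slowest functions first.
ranking = sorted(TIMINGS, key=lambda name: TIMINGS[name]["total_sec"], reverse=True)
print(ranking)
```

Sorting by total time rather than per-call time is a judgment call: a cheap function called a million times can hurt more than one slow call.
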

8. Don’t burn money on cloud

Nobody likes an engineer who wastes cloud resources.

Some of our experiments can run for hours. It's difficult to keep track of them and shut down the cloud instance when they are done. I have made this mistake myself and have also seen people leave instances on for days.

This happens when we work on a Friday, leave something running and only realise it on Monday 😆

Just call this function at the end of execution and your ass will never be on fire again!!!

But wrap the main code in try and call this method in except as well, so that the server is not left running if an error happens. Yes, I have dealt with these cases too 😅

Let’s be a bit responsible and not generate CO2. 😅

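import math
import os


def run_command(cmd):
    return os.system(cmd)


def shutdown(seconds=0, os_name='linux'):
    """Shutdown system after seconds given. Useful for shutting EC2 to save costs."""
    # The parameter is os_name so that it does not shadow the os module.
    if os_name == 'linux':
        # Linux shutdown takes its delay in whole minutes (+0 means now),
        # so the seconds are rounded up.
        run_command('sudo shutdown -h +%d' % math.ceil(seconds / 60))
    elif os_name == 'windows':
        run_command('shutdown -s -t %s' % seconds)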
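The try/except advice can be sketched with try/finally, which covers both the success and the failure path in one place. `run_then_shutdown`, `failing_job` and `fake_shutdown` are hypothetical names for this example; the shutdown is passed in as a function so the sketch can be run without actually turning anything off. In real use you would pass the `shutdown` function from above.

```python
import logging


def run_then_shutdown(job, shutdown_fn):
    """Run job(), then always call shutdown_fn(), even if job() raises."""
    try:
        return job()
    except Exception:
        # Log the traceback so the failure is still visible after the machine stops.
        logging.exception("job failed; shutting down anyway")
        raise
    finally:
        shutdown_fn()


# Stand-ins for a real training run and the real shutdown call.
calls = []


def failing_job():
    raise ValueError("training crashed")


def fake_shutdown():
    calls.append("shutdown")


try:
    run_then_shutdown(failing_job, fake_shutdown)
except ValueError:
    pass

print(calls)
```

Make sure logs and model files are written to persistent storage before the shutdown fires, otherwise the traceback dies with the instance.
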

9. Create and save reports

After a certain point in modeling, all the great insights come from error and metric analysis. Make sure to create and save well-formatted reports for yourself and your manager.

Anyway, management loves reports, right? 😆

import json
import os

from sklearn.metrics import (accuracy_score, classification_report,
                             confusion_matrix, f1_score, fbeta_score)


def get_metrics(y, y_pred, beta=2, average_method='macro', y_encoder=None):
    if y_encoder is not None:
        # Map encoded labels back to their original names for readable reports.
        y = y_encoder.inverse_transform(y)
        y_pred = y_encoder.inverse_transform(y_pred)
    return {
        'accuracy': round(accuracy_score(y, y_pred), 4),
        'f1_score_macro': round(f1_score(y, y_pred, average=average_method), 4),
        # beta is keyword-only in recent scikit-learn releases.
        'fbeta_score_macro': round(fbeta_score(y, y_pred, beta=beta, average=average_method), 4),
        'report': classification_report(y, y_pred, output_dict=True),
        'report_csv': classification_report(y, y_pred, output_dict=False).replace('\n', '\r\n')
    }


def save_metrics(metrics: dict, model_directory, file_name):
    path = os.path.join(model_directory, file_name + '_report.txt')
    # The text report is already formatted, so write it out as-is.
    # newline='' keeps the \r\n line endings from being translated again.
    with open(path, 'w', newline='') as f:
        f.write(metrics['report_csv'])
    metrics.pop('report_csv')
    path = os.path.join(model_directory, file_name + '_metrics.json')
    with open(path, 'w') as f:
        json.dump(metrics, f, indent=4)


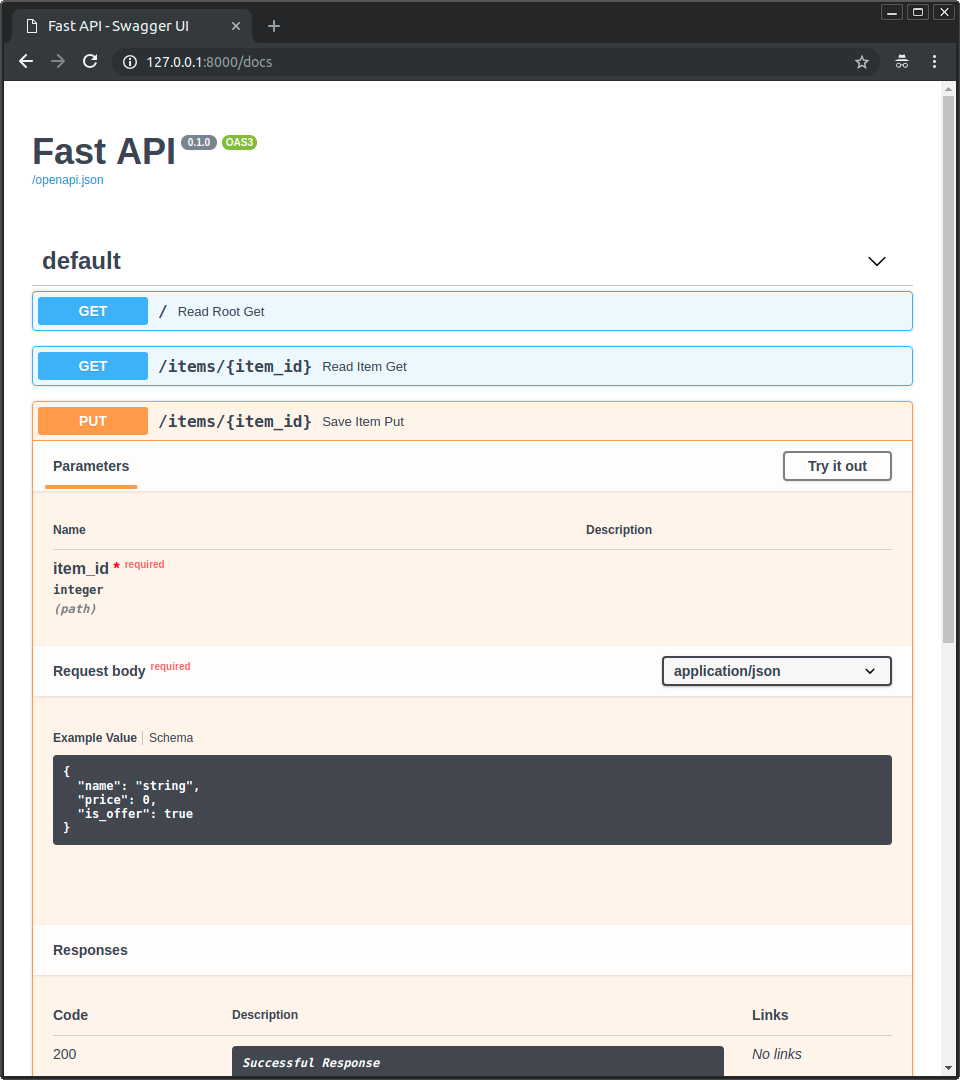

10. Write great APIs

All that ends bad is bad.

You can do great data cleaning and modeling and still create huge chaos at the end. In my experience, many people are not clear about how to write good APIs, document them and set up the server. I will be writing another post on this soon, but let me get you started.

Below is a good methodology for deploying a classical ML or DL model under not-too-high load, around 1,000 requests per minute.

Meet the combo: FastAPI + uvicorn + gunicorn

- Fastest: Write the API in FastAPI, which ranks among the fastest Python web frameworks because it is built on Starlette and runs on an ASGI server such as uvicorn.

- Documentation: Writing the API in FastAPI gives us free documentation and test endpoints at http://url/docs, which FastAPI autogenerates and updates as we change the code.

- Workers: Deploy the API using the gunicorn server because gunicorn can start more than one worker, and you should keep at least 2.

Run these commands to deploy using 4 workers. Optimise the number of workers by load testing.

pip install fastapi uvicorn gunicorn

gunicorn -w 4 -k uvicorn.workers.UvicornH11Worker main:app

Conclusion

I hope you had a good time reading this. Please hit like if you enjoyed it and share with friends who can benefit as well 😃
